Key takeaways

- Traditional activity-based metrics often fail to distinguish between high-volume effort and actual results. Throughput offers a clearer view of performance by accounting for decision quality and the health of the case backlog.

- By focusing on net movement rather than gross closures, a throughput-based approach discourages low-quality work and rewards employees for getting tasks right the first time.

- Unlike metrics that fluctuate with inbound volume, throughput encourages consistent productivity. It incentivizes teams to process new assignments during surges and resolve backlogs during slower periods.

One of the most persistent challenges in inventory-driven back-office and operational environments is answering a simple question: How do we measure productivity fairly and meaningfully while driving positive business outcomes? In our experience, incorporating employee throughput alongside traditional metrics can unlock significant benefits, including greater visibility into team productivity, improved work quality, and more effective inventory management.

The reality of inventory-driven work

In many operational settings, particularly in insurance claims, mortgage processing, underwriting, and other case-based functions, employees typically do not process work straight through from start to finish in a single touch. Instead, employees own a portfolio of open cases that require time, multiple interactions, and ongoing management. Files may be returned for reprocessing due to new information surfacing weeks later or quality issues during initial execution. Cases can also be reassigned across team members as workloads shift, and inbound volume may spike or slow depending on external conditions.

In Property and Casualty claims, for example, an adjuster may carry dozens of open residential damage claims. A file may close after an initial payment, only to reopen later due to supplemental damage or documentation gaps. Similarly, in mortgage processing, an underwriter may clear a file for the next stage, only to have it returned because a document was incomplete or an undisclosed liability was identified. This dynamic setting poses a challenge for effectively measuring employee productivity.

In our experience, clients have typically relied on a few common approaches to measure performance:

- Activity-based metrics: Measures how frequently an associate completes tasks within a workflow. While these metrics effectively capture volume of work, they provide limited insight into how productive that effort truly is.

- First closes: Measures the number of files completed for the first time and advanced to the next stage. While this reflects initial productivity, first closes are blind to rework and can discourage working on reopened files.

- Total closes: Measures the total number of files completed, including those that were reopened. While more comprehensive, total closes reward all closures equally, including those where extra work could have been avoided.

These approaches are also susceptible to being “gamed”, as employees may temporarily inflate numbers through quick, low-quality closures to meet targets.

Measuring throughput introduces a different dimension. It considers not only how many files are being closed, but also how effectively an individual is managing their inventory. In its simplest terms, throughput over a given timeframe can be defined as:

Throughput = Net new files assigned − Change in total pending inventory

where:

- Net new files assigned: The fresh workload added to an individual’s desk during the period

- Change in total pending inventory: The number of open files at the end of the period minus the number at the beginning of the period
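As a minimal sketch, the definition can be written as a small Python function. The function name `throughput` and its parameter names are hypothetical and illustrative only; the counts are assumed to come from whatever case-management data an organization already tracks for each period.

```python
def throughput(net_new_assigned: int, start_inventory: int, end_inventory: int) -> int:
    """Throughput for one period: net new files assigned minus the
    change in pending inventory (end of period minus start of period)."""
    change_in_inventory = end_inventory - start_inventory
    return net_new_assigned - change_in_inventory


# Hypothetical period: 12 files assigned while open inventory falls from 30 to 25.
print(throughput(net_new_assigned=12, start_inventory=30, end_inventory=25))  # 17
```

When inventory shrinks, the change is negative and throughput exceeds the number of new assignments, which is how working down a backlog shows up in this measure.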

3 key benefits of throughput

1. Clear View of True Productivity

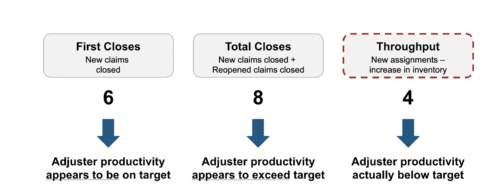

Imagine a P&C claims adjuster starts the week with 40 open claims and has a target of completing 6 claims. During the week, they receive 10 new claims, and 4 previously closed claims reopen due to errors in the initial closure. The adjuster progresses 6 of the new claims to first closure and closes 2 of the reopened claims.

By the end of the week, the adjuster’s inventory has increased to 46 open claims: 40 (starting inventory) + 10 (new claims assigned) + 4 (claims reopened) − 8 (claims closed) = 46.

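Let’s compare how the adjuster performed against the weekly target of 6 claims under each measure:

- First closes: 6 claims, exactly meeting the target.

- Total closes: 8 claims (6 first closes plus 2 reclosed claims), exceeding the target.

- Throughput: 4 claims (10 new claims assigned minus the 6-claim increase in pending inventory), falling short of the target because the 4 reopened claims offset part of the week’s closures.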

Throughput captures the combined impact of decision quality, rework created or avoided, and inventory management. As a result, leaders and managers gain clearer visibility into performance: inventory growth becomes noticeable before it becomes problematic, and patterns of recurring rework are easier to spot.
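The arithmetic in the adjuster example can be reproduced with a short sketch. The `WeekActivity` class and its field names are hypothetical, and the sketch assumes, as the example does, that inventory changes only through new assignments, reopenings, and closures.

```python
from dataclasses import dataclass


@dataclass
class WeekActivity:
    start_inventory: int  # open claims at the start of the week
    new_assigned: int     # fresh claims added to the desk
    reopened: int         # previously closed claims that reopened
    first_closes: int     # claims closed for the first time
    reclosed: int         # reopened claims closed again

    @property
    def total_closes(self) -> int:
        return self.first_closes + self.reclosed

    @property
    def end_inventory(self) -> int:
        # Assumption: inventory moves only via assignments, reopenings, and closures.
        return self.start_inventory + self.new_assigned + self.reopened - self.total_closes

    @property
    def throughput(self) -> int:
        # Net new files assigned minus the change in pending inventory.
        return self.new_assigned - (self.end_inventory - self.start_inventory)


week = WeekActivity(start_inventory=40, new_assigned=10, reopened=4, first_closes=6, reclosed=2)
target = 6
for name, value in [("First closes", week.first_closes),
                    ("Total closes", week.total_closes),
                    ("Throughput", week.throughput)]:
    print(f"{name}: {value} (target {target})")
print(f"Ending inventory: {week.end_inventory}")
```

Raising `reopened` by one while holding everything else fixed lowers throughput by one claim, while first closes and total closes do not change.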

2. Drives Higher-Quality Work and Reduces Avoidable Rework

Consider a residential water damage claim that is closed without complete documentation. Weeks later, the file reopens because key information was missing. The adjuster must review the claim again, gather additional documentation, and reclose the file. The adjuster records activity twice, but the second round of work exists only because the first was not done thoroughly.

Under traditional productivity measures, this looks like additional work completed and may be rewarded as multiple closures. Under a throughput lens, it shows up as a reopening that reduces net progress.
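To illustrate, the sketch below follows a single hypothetical water damage claim through the weeks in which something happens to it: it is assigned and closed, reopens weeks later, and is then reclosed. The weekly history and variable names are illustrative assumptions, and inventory is again assumed to change only through assignments, reopenings, and closures.

```python
# Hypothetical weekly history for one claim: (label, new_assigned, reopened, closes).
# Weeks in which nothing happens to this claim are omitted.
history = [
    ("Week 1: assigned and closed", 1, 0, 1),
    ("Week 4: reopened for missing documents", 0, 1, 0),
    ("Week 5: reclosed", 0, 0, 1),
]

inventory = 0
total_closes = 0
total_throughput = 0
for label, new_assigned, reopened, closes in history:
    start = inventory
    inventory = start + new_assigned + reopened - closes
    # Throughput: new assignments minus the change in pending inventory.
    period_throughput = new_assigned - (inventory - start)
    total_closes += closes
    total_throughput += period_throughput
    print(f"{label}: closes={closes}, throughput={period_throughput}")

print(f"Total closes: {total_closes}, total throughput: {total_throughput}")
```

Total closes credits the claim twice, while throughput credits it once overall, and the week of the reopening shows a negative value.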

Of course, not all rework is avoidable. In many operational environments, new information emerges or legitimate changes occur that require additional review. The goal is not to eliminate all rework, but to minimize avoidable rework, the kind created by incomplete or low-quality initial execution.

By focusing on net movement instead of gross activity, throughput discourages churn and reinforces the importance of getting work right the first time.

3. Encourages Strong and Disciplined Inventory Management

Operational environments are typically dynamic, with fluctuations in incoming volume. In P&C claims, for example, a major storm can generate thousands of water damage claims within days, creating a sudden surge in new assignments. At other times, inbound volume slows, and team capacity temporarily exceeds new work.

Traditional measures can be misleading in volatile environments. “First Closes” are highly dependent on inbound volume, leading to significant fluctuations in perceived productivity with demand. While total closes offer a more stable measure, they do not account for quality and often reward avoidable rework, ultimately overstating true productivity. Throughput addresses both limitations by measuring net progress, capturing productivity independent of inbound volume, while also reflecting the impact of rework.
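One way to see why throughput does not move directly with inbound volume is to expand the definition. Assuming pending inventory changes only through net new assignments, reopenings, and closures, the change in inventory equals new assigned + reopened − closed, so throughput = new assigned − (new assigned + reopened − closed) = closed − reopened. New assignments cancel out. The sketch below checks this with hypothetical volumes; the function name `throughput_from_flows` is illustrative only.

```python
def throughput_from_flows(new_assigned: int, reopened: int, closed: int) -> int:
    # Assumption: inventory changes only via new assignments, reopenings, and closures.
    change_in_inventory = new_assigned + reopened - closed
    return new_assigned - change_in_inventory


# Same closures and reopenings under very different (hypothetical) inbound volumes.
for inbound in (5, 10, 200):
    print(inbound, throughput_from_flows(new_assigned=inbound, reopened=2, closed=12))
# Each line prints a throughput of 10, i.e. 12 closed minus 2 reopened.
```

Inbound volume can still influence throughput indirectly, because it affects how much work is available to close; the identity only shows that arrivals on their own add nothing to the measure.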

In doing so, throughput shifts the focus from short-term activity to disciplined inventory management. By measuring net progress, it encourages employees to maintain consistent productivity regardless of inbound volume. When volume is high, the focus is on progressing new work entering the inventory. During slower periods, attention naturally shifts to resolving existing files and reducing backlog.

While throughput does not eliminate volatility, it provides a clearer view of whether work is truly moving forward. It creates consistent incentives, encouraging employees to work effectively across all conditions, not just when demand is high.

Conclusion

In inventory-driven environments, productivity is defined not by how much activity occurs, but by how much that activity actually moves work forward. When employees own live inventories of cases or files, performance must reflect the quality of execution, the amount of rework generated, and the health of the backlog, not just the number of tasks completed.

Throughput can be a powerful addition to traditional KPIs. It helps managers distinguish between effort and productive progress, encourages higher-quality initial execution by making rework visible, and is difficult for employees to “game” because poor-quality closures tend to return as rework that reduces throughput.

Throughput offers a more complete answer to the question of how to measure productivity fairly and meaningfully. And in complex operational environments, that more complete view is what ultimately drives sustainable performance.

If your organization operates in a similarly complex environment and is looking to improve how productivity is measured and managed, we would be happy to discuss how our experienced team can help design and implement a throughput-based approach to drive sustainable performance.

Frequently asked questions

1. How is throughput calculated in an inventory-driven environment?

Throughput is determined by taking the Net New Files Assigned (the workload added during a specific period) and subtracting the Change in Total Pending Inventory (the difference between open files at the end versus the start of the period).

2. Why are traditional metrics like “Total Closes” sometimes misleading?

“Total Closes” can overstate true productivity because it rewards all closures equally, including those resulting from avoidable rework caused by poor initial execution. It can also be “gamed” by employees who prioritize quick, low-quality closures to meet targets.

3. Does a throughput approach aim to eliminate all rework?

No, the goal is not to eliminate all rework, as new information often emerges in complex cases that requires legitimate additional review. Instead, throughput aims to minimize avoidable rework created by incomplete or low-quality work.